Your property scored 87% on the last brand audit. Is that good? Depends on the threshold. What drove the score? Depends on the weighting. How does it compare to other properties? Depends on the methodology.

Audit scores are simultaneously the most visible metric in hotel operations and the least understood. GMs fixate on the number without knowing how it’s calculated. QA teams track trends without understanding what drives them. Department heads receive their portion of the score without context on how their 82% affects the overall 87%.

This knowledge gap creates problems: gaming behaviors, misallocated improvement effort, and false confidence in scores that mask underlying issues.

The Anatomy of an Audit Score

Before optimizing scores, understand how they’re constructed:

Basic Calculation

At its simplest, an audit score is:

Total Points Earned ÷ Total Points Possible × 100 = Score %

A checklist with 100 items, all worth 1 point, where 87 pass: 87 ÷ 100 × 100 = 87%

This basic approach treats all items equally—a crooked picture frame matters as much as a broken fire door.
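Here is a minimal Python sketch of the basic calculation. It assumes each checklist item is recorded as a simple pass/fail boolean. The function name `basic_score` is illustrative and does not come from any particular audit system.

```python
def basic_score(results: list[bool]) -> float:
    """Unweighted audit score: every item is worth exactly 1 point."""
    if not results:
        raise ValueError("checklist has no items")
    earned = sum(1 for passed in results if passed)
    # Multiply before dividing so whole-number results stay exact.
    return 100 * earned / len(results)


# The 100-item example: 87 items pass, 13 fail.
checklist = [True] * 87 + [False] * 13
print(basic_score(checklist))  # 87.0
```

Every item counts as 1 point. A failed fire door and a crooked frame therefore move this score by exactly the same amount.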

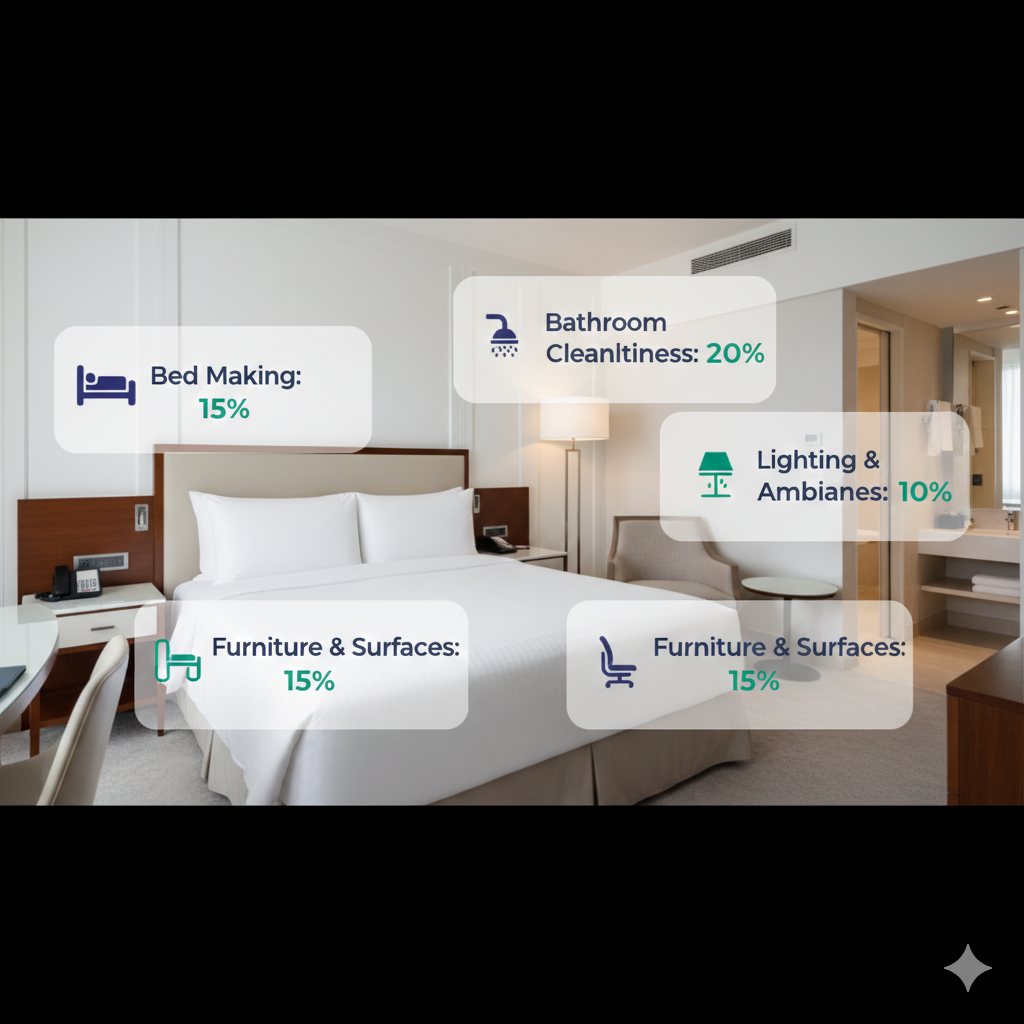

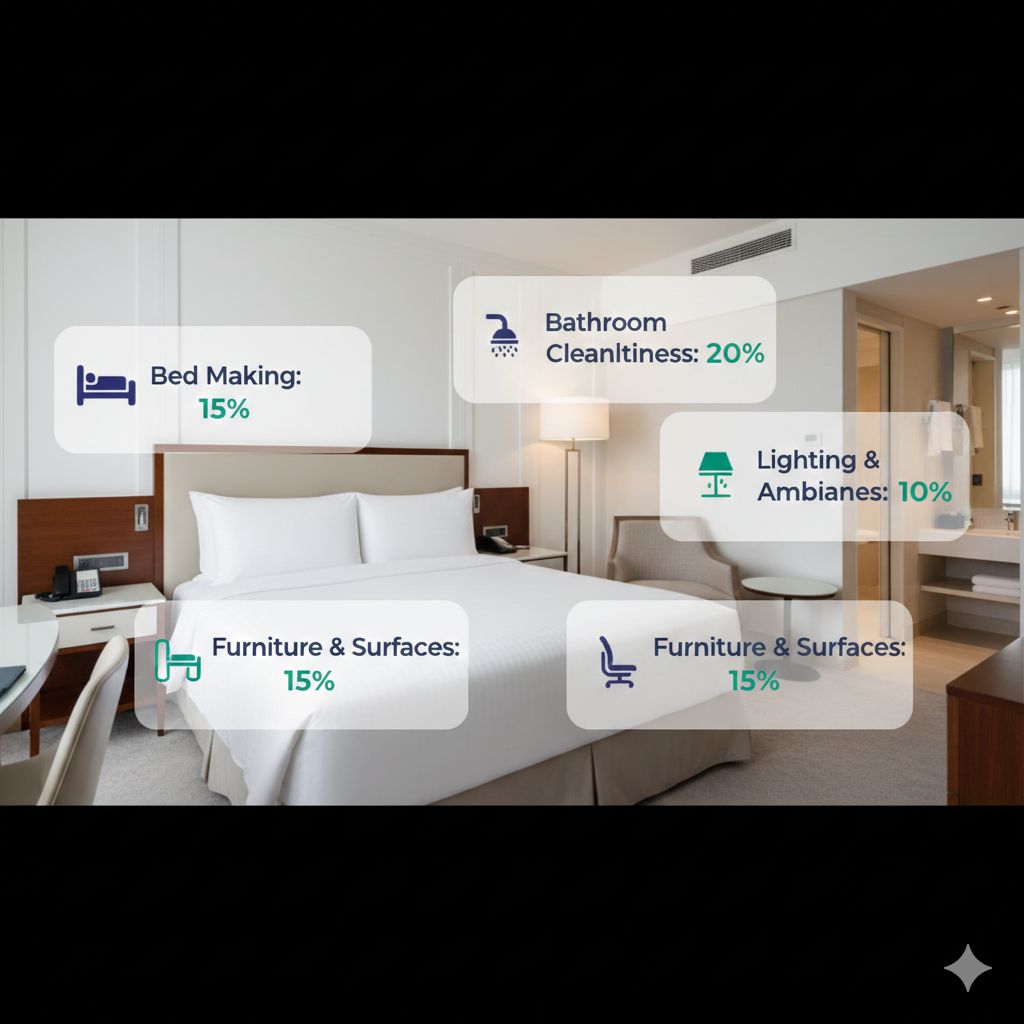

Weighted Scoring

Real audit systems apply weights to reflect relative importance:

Weighted Calculation: (Sum of Weighted Points Earned) ÷ (Sum of Weighted Points Possible) × 100

Example with category weighting:

| Category | Weight | Items | Max Points |
|---|---|---|---|
| Guest Room Cleanliness | 25% | 50 | 250 |
| Front Desk Service | 20% | 30 | 200 |
| F&B Quality | 20% | 40 | 200 |
| Safety & Security | 20% | 25 | 200 |
| Public Areas | 15% | 35 | 150 |
| Total | 100% | 180 | 1,000 |

If a property earns 875 of 1,000 possible points: 87.5%

But how those 125 lost points distribute matters enormously. Losing 100 points in Safety vs. 100 points in Public Areas carries different operational implications.
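The sketch below shows how two properties can share one overall score while differing sharply by category. It assumes each category's weight is encoded as its share of 1,000 points, as in the table above. The two property profiles are hypothetical.

```python
# Category maximums from the example table: each category's weight
# expressed as its share of 1,000 total points.
MAX_POINTS = {
    "Guest Room Cleanliness": 250,
    "Front Desk Service": 200,
    "F&B Quality": 200,
    "Safety & Security": 200,
    "Public Areas": 150,
}


def weighted_score(earned: dict[str, float]) -> float:
    """(Sum of weighted points earned) / (sum of weighted points possible) x 100."""
    return 100 * sum(earned.values()) / sum(MAX_POINTS.values())


def category_breakdown(earned: dict[str, float]) -> dict[str, float]:
    """Score % within each category."""
    return {cat: 100 * earned[cat] / MAX_POINTS[cat] for cat in MAX_POINTS}


# Two hypothetical properties that each lose 125 points in total:
# 25 in Guest Rooms plus 100 in either Safety or Public Areas.
safety_gap = {**MAX_POINTS, "Guest Room Cleanliness": 225, "Safety & Security": 100}
public_gap = {**MAX_POINTS, "Guest Room Cleanliness": 225, "Public Areas": 50}

for label, earned in [("Safety gap", safety_gap), ("Public Areas gap", public_gap)]:
    breakdown = category_breakdown(earned)
    print(label, weighted_score(earned),
          round(breakdown["Safety & Security"], 1), round(breakdown["Public Areas"], 1))
```

Both properties print the same 87.5% overall. The breakdown shows Safety at 50% in one and Public Areas at about 33% in the other.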

Item-Level Weighting

Beyond category weights, individual items may carry different values:

| Item | Weight | Rationale |
|---|---|---|
| Fire extinguisher present and inspected | 5 points | Safety critical |
| Bed made properly | 2 points | Guest comfort |
| Promotional materials current | 1 point | Marketing detail |
| Emergency contact information posted | 3 points | Regulatory compliance |

This granular weighting allows precision in reflecting what matters most.
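Using only the four items in the table, this sketch shows how much a single failure costs depending on which item fails. It assumes every other item passes. The function name is illustrative.

```python
# Item weights from the example table above.
ITEM_WEIGHTS = {
    "Fire extinguisher present and inspected": 5,
    "Bed made properly": 2,
    "Promotional materials current": 1,
    "Emergency contact information posted": 3,
}


def item_weighted_score(failed: set[str]) -> float:
    """Score % when every item passes except those listed in `failed`."""
    possible = sum(ITEM_WEIGHTS.values())
    earned = sum(w for item, w in ITEM_WEIGHTS.items() if item not in failed)
    return 100 * earned / possible


print(round(item_weighted_score({"Fire extinguisher present and inspected"}), 1))  # 54.5
print(round(item_weighted_score({"Promotional materials current"}), 1))  # 90.9
```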

Pro Tip from the Floor: “We analyzed our brand audit and found that 15 items out of 500+ accounted for 40% of our score. Those were the critical-weighted items. We built a daily checklist around just those 15 items. Score jumped 8 points in two quarters.” — Director of Quality, select-service portfolio
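Finding a short list like the one in this quote comes down to sorting items by weight and keeping the heaviest until they cover the target share of points. The sketch below is hypothetical: it assumes you can export each item's point value from your audit, and the item names and weights are placeholders.

```python
def items_covering_share(weights: dict[str, float], target_share: float) -> list[str]:
    """Smallest set of items (by count) whose combined weight reaches
    target_share of all available points, taking the heaviest items first."""
    total = sum(weights.values())
    selected: list[str] = []
    covered = 0.0
    for item, weight in sorted(weights.items(), key=lambda kv: kv[1], reverse=True):
        if covered >= target_share * total:
            break
        selected.append(item)
        covered += weight
    return selected


# Hypothetical item weights; substitute the item-level weights from your own checklist.
weights = {"Fire exits clear": 5, "Emergency info posted": 3, "Bed made": 2, "Promo materials": 1}
print(items_covering_share(weights, 0.40))  # ['Fire exits clear']
```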

Industry Scoring Frameworks

Different audit frameworks use different approaches:

Forbes Travel Guide

Forbes uses up to 900 criteria in its luxury hotel evaluations, with heavy emphasis on:

- Service standards (emotional intelligence, personalization)

- Physical product quality

- Facilities and amenities

- Overall guest experience

Forbes ratings (Four-Star, Five-Star) require meeting specific thresholds across multiple categories, with particular attention to service delivery that demonstrates “anticipatory service” and genuine hospitality.

LQA (Leading Quality Assurance)

LQA standards comprise over 800 quantitative and qualitative benchmarks organized across eight main performance criteria:

- Service Excellence (238 standards) - Fundamental guest service quality

- Efficiency - Speed and accuracy of service delivery

- Emotional Intelligence - Staff ability to read and respond to guest needs

- Product Quality - Physical condition of facilities and amenities

- Food and Beverage - Culinary excellence and dining service

- Spa and Recreation - Wellness and leisure offerings

- Technical Quality - Building systems and equipment

- Sustainability - Environmental and social responsibility

LQA emphasizes emotional intelligence and personalization—elements particularly challenging to quantify but essential for luxury differentiation.

Brand-Specific Standards

Major hotel brands develop proprietary scoring systems:

Common Elements

- Weighted categories aligned with brand positioning

- Required scores for franchise compliance

- Mystery shop components for service evaluation

- Physical inspection for product standards

- Documentation review for operational compliance

Typical Thresholds

- 90%+ = Excellent (recognition/rewards)

- 80-89% = Meets expectations

- 70-79% = Needs improvement (corrective action required)

- Below 70% = Failing (remediation plan, potential consequences)

Thresholds vary significantly by brand and segment.
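Mapping a score to a band is a short lookup. This sketch uses the typical values listed above as placeholders and assumes each band's lower bound is inclusive.

```python
# Typical bands from the list above; lower bounds are treated as inclusive.
# Real brands set their own cut-offs, so treat these values as placeholders.
BANDS = [
    (90.0, "Excellent"),
    (80.0, "Meets expectations"),
    (70.0, "Needs improvement"),
]


def classify(score: float) -> str:
    for floor, label in BANDS:
        if score >= floor:
            return label
    return "Failing"


print(classify(87.5))  # Meets expectations
print(classify(89.6))  # Meets expectations
```

Inclusive lower bounds also cover fractional scores such as 89.6%. That score falls between "80-89%" and "90%+" as written, but it still receives a band.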

Understanding Scoring Methodology Choices

How a scoring system is designed affects what it measures and motivates:

Binary vs. Graduated Scoring

Binary (Pass/Fail)

- Item either meets standard (1) or doesn’t (0)

- Simple to administer

- May miss degrees of compliance

Graduated

- Scale allows partial credit (0-1-2-3 or 0-25-50-75-100%)

- Captures improvement short of perfection

- Requires more subjective judgment

Example: Bathroom Cleanliness

- Binary: Clean (pass) or Not Clean (fail)

- Graduated: Spotless (3) / Clean with minor issues (2) / Visible issues (1) / Unacceptable (0)
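The sketch below scores the bathroom example both ways to show what binary scoring misses. It assumes only the top two ratings count as "Clean" in binary mode; that cut-off is a choice made for illustration. The room ratings are hypothetical.

```python
# Graduated scale from the bathroom example.
SCALE = {"Spotless": 3, "Clean with minor issues": 2, "Visible issues": 1, "Unacceptable": 0}
MAX_LEVEL = 3
PASS_LEVEL = 2  # assumption: only the top two ratings count as "Clean" in binary mode


def binary_score(ratings: list[str]) -> float:
    passes = sum(1 for r in ratings if SCALE[r] >= PASS_LEVEL)
    return 100 * passes / len(ratings)


def graduated_score(ratings: list[str]) -> float:
    return 100 * sum(SCALE[r] for r in ratings) / (MAX_LEVEL * len(ratings))


# Four bathrooms before and after a hypothetical cleaning push.
before = ["Spotless", "Clean with minor issues", "Unacceptable", "Unacceptable"]
after = ["Spotless", "Clean with minor issues", "Visible issues", "Visible issues"]
print(binary_score(before), binary_score(after))  # 50.0 50.0
print(round(graduated_score(before), 1), round(graduated_score(after), 1))  # 41.7 58.3
```

Under binary scoring, the move from "Unacceptable" to "Visible issues" is invisible. Graduated scoring records it as progress.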

N/A Handling

How “Not Applicable” items are treated significantly affects scores:

Option 1: Remove from Denominator

If an item doesn’t apply, remove its contribution from both the numerator and the denominator.

- Prevents unfair penalty for missing items

- Requires clear N/A criteria

Option 2: Count as Automatic Pass

If an item doesn’t apply, award it full points.

- Simpler calculation

- May inflate scores

Option 3: Count as Automatic Fail

If an item should apply but the property doesn’t offer it, award no points.

- Catches missing standards

- May unfairly penalize properties

Example: “Mini-bar restocked” in a property without mini-bars

- Option 1: Excluded from score

- Option 2: Full points (it’s not wrong)

- Option 3: No points (you should have mini-bars)
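The three options can be implemented as a single policy switch. This is a minimal sketch, assuming each result is stored as a (points possible, points earned) pair with `None` marking an N/A item. The audit data is hypothetical.

```python
from enum import Enum
from typing import Optional


class NAPolicy(Enum):
    EXCLUDE = 1    # Option 1: remove from denominator
    AUTO_PASS = 2  # Option 2: award full points
    AUTO_FAIL = 3  # Option 3: award no points


def score_with_na(results: list[tuple[float, Optional[float]]], policy: NAPolicy) -> float:
    """results holds (points_possible, points_earned) pairs; earned is None for N/A items."""
    earned_total = possible_total = 0.0
    for possible, earned in results:
        if earned is None:  # item marked N/A
            if policy is NAPolicy.EXCLUDE:
                continue
            possible_total += possible
            if policy is NAPolicy.AUTO_PASS:
                earned_total += possible
        else:
            possible_total += possible
            earned_total += earned
    if possible_total == 0:
        raise ValueError("no scorable items")
    return 100 * earned_total / possible_total


# Hypothetical audit: three scored items plus "Mini-bar restocked" (2 points) marked N/A.
audit = [(5, 5), (2, 2), (3, 0), (2, None)]
for policy in NAPolicy:
    print(policy.name, round(score_with_na(audit, policy), 1))
```

The same conditions produce 70.0, 75.0, or 58.3 depending only on the N/A policy.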

Critical Item Handling

Some items are non-negotiable regardless of overall score:

Critical Fail System

Certain items, if failed, trigger:

- Automatic score reduction

- Immediate corrective action requirement

- Escalation regardless of overall score

- Potential automatic audit failure

Common Critical Items

- Safety violations (fire exits, equipment)

- Health code breaches

- Security gaps

- Major cleanliness failures

- Regulatory compliance items

A property might score 92% overall but fail the audit due to one critical item failure.
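This sketch reproduces that scenario. It assumes a critical item fails whenever it earns less than full points, and that any critical failure fails the audit outright (the strictest of the responses listed above). The 80% pass mark and the item names are placeholders.

```python
from dataclasses import dataclass


@dataclass
class ItemResult:
    name: str
    possible: float
    earned: float
    critical: bool = False


def audit_outcome(results: list[ItemResult], pass_mark: float = 80.0):
    """Return (score %, passed?, names of failed critical items)."""
    score = 100 * sum(r.earned for r in results) / sum(r.possible for r in results)
    critical_failures = [r.name for r in results if r.critical and r.earned < r.possible]
    # Any critical failure overrides the overall score.
    passed = not critical_failures and score >= pass_mark
    return score, passed, critical_failures


# Hypothetical audit that scores 92% overall but fails one critical item.
results = [
    ItemResult("Guest rooms and public areas", possible=95, earned=92),
    ItemResult("Fire exits unobstructed", possible=5, earned=0, critical=True),
]
print(audit_outcome(results))  # (92.0, False, ['Fire exits unobstructed'])
```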

Pro Tip from the Floor: “We learned the hard way that critical items trump overall score. Scored 94%—our best ever—but had one blocked fire exit in the storage area. Automatic fail. The entire leadership team walked every exit the next morning and every morning since.” — Assistant GM, conference hotel

Score Calculation in Practice

Understanding how specific calculations work:

Simple Weighted Average

- Category A: 20% weight, 85% score = 0.20 × 85 = 17

- Category B: 30% weight, 90% score = 0.30 × 90 = 27

- Category C: 25% weight, 80% score = 0.25 × 80 = 20

- Category D: 25% weight, 95% score = 0.25 × 95 = 23.75

Overall Score: 17 + 27 + 20 + 23.75 = 87.75%
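The same calculation in Python, with a check that the weights still sum to 100%. The category labels come from the example; the function name is illustrative.

```python
# (weight, category score %) pairs from the example above.
CATEGORIES = {
    "A": (0.20, 85),
    "B": (0.30, 90),
    "C": (0.25, 80),
    "D": (0.25, 95),
}


def weighted_average(categories: dict[str, tuple[float, float]]) -> float:
    total_weight = sum(w for w, _ in categories.values())
    if abs(total_weight - 1.0) > 1e-9:
        raise ValueError(f"weights sum to {total_weight}, expected 1.0")
    return sum(w * s for w, s in categories.values())


print(round(weighted_average(CATEGORIES), 2))  # 87.75
```

The weight check catches weights that no longer add up to 100% after a category is added or removed.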

Nested Weighting

Categories contain sections, sections contain items:

Category: Guest Rooms (40% of total)

- Section: Bedroom (50% of category)
  - Item: Bed properly made (10% of section) = 1 point
  - Item: Linens fresh (15% of section) = 1 point
  - … etc.

- Section: Bathroom (35% of category)

- Section: Amenities (15% of category)

Nested weighting allows precise control but requires careful balance to avoid unintended emphasis.
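Multiplying each weight by the shares of every level above it gives an item's true share of the total score. This sketch uses only the weights shown in the example. The items under Bathroom and Amenities are not listed there, so they are left empty. The tree layout is an assumption made for illustration.

```python
# Each node maps a name to (share of its parent, children); leaves map to a float share.
TREE = {
    "Guest Rooms": (0.40, {
        "Bedroom": (0.50, {
            "Bed properly made": 0.10,
            "Linens fresh": 0.15,
        }),
        "Bathroom": (0.35, {}),   # items not listed in the example
        "Amenities": (0.15, {}),  # items not listed in the example
    }),
}


def effective_weights(tree: dict, parent_share: float = 1.0, path: tuple = ()) -> dict[str, float]:
    """Flatten a nested weight tree into each item's share of the total score."""
    flat: dict[str, float] = {}
    for name, node in tree.items():
        if isinstance(node, tuple):  # category or section: recurse with a smaller share
            share, children = node
            flat.update(effective_weights(children, parent_share * share, path + (name,)))
        else:  # item: local weight times every ancestor's share
            flat[" > ".join(path + (name,))] = parent_share * node
    return flat


for item, share in effective_weights(TREE).items():
    print(f"{item}: {share:.1%} of the total score")
```

An item worth 10% of its section ends up at 2% of the overall score (0.40 × 0.50 × 0.10). That dilution is what makes careful balancing necessary.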

Threshold-Based Scoring

Some systems use thresholds rather than linear scoring:

Example: Response Time

- Under 3 minutes: 100%

- 3-5 minutes: 75%

- 5-10 minutes: 50%

- Over 10 minutes: 0%

This prevents minor variations from moving scores while penalizing meaningful deviations.
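The response-time bands as written share their end points at 3, 5, and 10 minutes. This sketch therefore has to pick a boundary rule. It assumes exactly 3 or 5 minutes earns 75% and exactly 10 minutes earns 50%.

```python
def response_time_score(minutes: float) -> int:
    """Banded credit for response time (boundary handling is an assumption)."""
    if minutes < 3:
        return 100
    if minutes <= 5:
        return 75
    if minutes <= 10:
        return 50
    return 0


# Small variations inside a band leave the score unchanged.
print([response_time_score(m) for m in (2.5, 3.2, 4.8, 7.0, 12.0)])  # [100, 75, 75, 50, 0]
```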

Designing Effective Scoring Systems

If you’re building or refining your audit scoring:

Alignment with Priorities

Step 1: Identify what matters most (guest experience drivers, safety requirements, brand differentiators)

Step 2: Weight categories to reflect relative importance

Step 3: Weight items within categories proportionally

Step 4: Define critical items requiring special treatment

Step 5: Validate that score changes reflect actual improvement

Avoiding Common Design Mistakes

Mistake: Equal Weighting

Treating all items equally dilutes focus on what matters. Fix: Apply meaningful weights based on impact analysis.

Mistake: Too Many Critical Items

If 50 items are all “critical,” nothing is critical. Fix: Limit critical items to genuine safety and compliance requirements.

Mistake: Subjective Criteria

“Room feels welcoming” can’t be scored consistently. Fix: Define objective, observable criteria for each item.

Mistake: No N/A Provision

Forces auditors to score items that don’t apply. Fix: Create clear N/A guidelines for each item type.

Mistake: Single Overall Score

One number hides where problems actually exist. Fix: Provide category and section breakdowns.

Calibration Across Auditors

Different auditors scoring the same conditions differently undermines system validity:

Calibration Methods

- Joint audits with scoring comparison

- Photo-based scoring exercises

- Regular scoring alignment sessions

- Detailed scoring guides with examples

- Inter-rater reliability measurement

If auditor A scores a room 85% and auditor B scores the same room 78%, the scoring system isn’t working.
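Inter-rater reliability is usually measured item by item rather than by comparing two totals. One standard statistic is Cohen's kappa, which corrects raw agreement for the agreement two auditors would reach by chance alone. Here is a minimal sketch for two auditors making pass/fail calls. The sample calls are hypothetical.

```python
def cohens_kappa(a: list[bool], b: list[bool]) -> float:
    """Chance-corrected agreement between two auditors' pass/fail calls on the same items."""
    if len(a) != len(b) or not a:
        raise ValueError("need two non-empty rating lists of equal length")
    n = len(a)
    observed = sum(x == y for x, y in zip(a, b)) / n
    pa, pb = sum(a) / n, sum(b) / n  # each auditor's pass rate
    expected = pa * pb + (1 - pa) * (1 - pb)  # agreement expected by chance alone
    if expected == 1.0:  # both auditors marked every item the same way (all pass or all fail)
        return 1.0
    return (observed - expected) / (1 - expected)


# Hypothetical item-by-item calls from two auditors inspecting the same room.
auditor_a = [True, True, True, False, True, True, False, True]
auditor_b = [True, False, True, False, True, False, False, True]
print(round(cohens_kappa(auditor_a, auditor_b), 2))  # 0.5
```

Raw agreement in this example is 75%. Once agreement expected by chance is removed, kappa is 0.5, which is a more honest picture of calibration.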

Score Interpretation and Communication

Raw numbers require context:

Trend Over Time

A single score is a snapshot. Trends tell the story:

- Is the property improving, declining, or stable?

- Are improvements sustained or temporary?

- Do scores drop after certain events (leadership changes, seasonal peaks)?

- How quickly do scores recover after initiatives?

Comparison Considerations

Comparing scores across properties requires caution:

Valid Comparisons

- Same audit methodology

- Same time period

- Same auditor pool (or calibrated auditors)

- Similar property types

Invalid Comparisons

- Different weighting systems

- Different N/A handling

- Pre/post methodology changes

- Different auditor standards

Score Decomposition

Help stakeholders understand what drives results:

By Category: “We scored 87% overall. Guest Rooms at 91%, but F&B at 78% pulled us down.”

By Section: “Within Guest Rooms, bathrooms scored 95%, but bedrooms at 85% created the gap.”

By Item: “Within bedrooms, ‘dust-free surfaces’ failed in 40% of rooms.”

This decomposition guides improvement effort to highest-impact areas.
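Category decomposition can be made quantitative: multiply each category's weight by its shortfall from 100% to see how many overall points it costs. In this sketch the Guest Rooms and F&B scores come from the quote. The weights and the third category are hypothetical, chosen so the overall score works out to 87%.

```python
def gap_by_category(categories: dict[str, tuple[float, float]]) -> list[tuple[str, float]]:
    """Points each category costs the overall score (weight x shortfall), largest first.

    categories maps name -> (weight as a fraction of the total, category score %).
    """
    gaps = {name: weight * (100 - score) for name, (weight, score) in categories.items()}
    return sorted(gaps.items(), key=lambda kv: kv[1], reverse=True)


# Hypothetical weights and third category; overall = 0.5*91 + 0.3*78 + 0.2*90.5 = 87.
property_scores = {
    "Guest Rooms": (0.50, 91),
    "F&B": (0.30, 78),
    "Public Areas": (0.20, 90.5),
}
for name, gap in gap_by_category(property_scores):
    print(f"{name}: -{gap:.1f} points")
```

The gaps sum to the 13 points between 87% and 100%. Each line is therefore that category's share of the shortfall.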

Pro Tip from the Floor: “I used to send department heads their overall section score. Now I send the three lowest-scoring items. They can’t hide behind an ‘acceptable’ 82% when they see specific failures that need fixing.” — Quality Manager, resort property

The Psychology of Scores

Scoring systems affect behavior—sometimes in unintended ways:

Gaming Behaviors

When scores carry consequences, gaming follows:

Known Audit Preparation

Properties that know the audit timing concentrate resources on audit-week performance. Solution: Unannounced audits, continuous self-auditing.

Auditor Relationship Manipulation

Extending hospitality to auditors in hopes of favorable scoring. Solution: Auditor rotation, third-party auditing, photo evidence requirements.

Easy Item Focus

Directing improvement effort at easy-to-fix items rather than the highest-impact ones. Solution: Weight items by guest impact, not fix difficulty.

Score Inflation Over Time

Auditors become more lenient as relationships develop. Solution: Calibration sessions, scoring drift monitoring, auditor rotation.

Threshold Effects

When scores have threshold consequences, behavior clusters around thresholds:

Example: Properties below 80% face management intervention

- Properties at 79% make extraordinary effort to cross 80%

- Properties at 85% may reduce effort since they’re “safe”

- Resources cluster around threshold, not actual improvement opportunity

Recognition vs. Penalty Systems

Penalty-focused (below threshold = consequences):

- Creates anxiety around audits

- May encourage hiding problems

- Minimum-sufficient behavior

Recognition-focused (above threshold = rewards):

- Creates aspiration

- Encourages transparency

- Continuous improvement orientation

Best systems combine both: meaningful recognition for excellence, clear accountability for failure.

Connecting Scores to Business Outcomes

Audit scores should correlate with results that matter:

Guest Satisfaction

If audit scores don’t predict guest satisfaction, something’s wrong with the audit:

- Track correlation between audit scores and review scores

- Analyze which audit categories best predict satisfaction

- Adjust weighting based on satisfaction drivers

- Investigate when high audit scores accompany low satisfaction
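Two of these checks are sketched below: the correlation between audit and review scores, and a list of properties where a high audit score sits alongside low satisfaction. The property names, the 5-point review scale, and both cut-offs are hypothetical. With only a handful of properties, the correlation will be noisy. Python 3.10 and later also ship `statistics.correlation`, which computes the same Pearson coefficient.

```python
from math import sqrt


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient between two equal-length series."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two series of equal length with at least 2 points")
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    if sx == 0 or sy == 0:
        raise ValueError("a constant series has no defined correlation")
    return cov / (sx * sy)


def high_audit_low_satisfaction(rows, audit_min: float, review_max: float) -> list[str]:
    """Names of properties with audit score >= audit_min but review score <= review_max."""
    return [name for name, audit, review in rows if audit >= audit_min and review <= review_max]


# Hypothetical (property, audit score %, review score on a 5-point scale) rows.
rows = [("Property A", 92, 4.6), ("Property B", 88, 4.4), ("Property C", 94, 3.9), ("Property D", 79, 4.0)]
print(round(pearson([r[1] for r in rows], [r[2] for r in rows]), 2))
print(high_audit_low_satisfaction(rows, audit_min=90, review_max=4.0))  # ['Property C']
```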

Financial Performance

Quality investments should pay returns:

- RevPAR (Revenue per Available Room) relationship to quality scores

- ADR (Average Daily Rate) correlation with audit ratings

- Market share changes following score improvements

- Cost of quality (audit cost vs. savings from fewer issues)

Brand Health

For branded properties:

- Brand standard compliance enabling marketing claims

- External rating eligibility (Forbes, AAA, LQA)

- Franchise risk mitigation

- Competitive positioning

Building Your Scoring Expertise

To optimize your audit scoring approach:

Analyze Current System

- Document current methodology (weights, thresholds, N/A handling)

- Calculate theoretical maximum and minimum scores

- Identify which items contribute most to variance

- Assess auditor calibration consistency

- Evaluate correlation with guest satisfaction

Identify Improvement Opportunities

- Are weights aligned with actual priorities?

- Do critical items reflect genuine safety/compliance needs?

- Is scoring consistent across auditors?

- Do scores predict outcomes that matter?

Implement Refinements

- Test scoring changes on historical data before implementing

- Communicate methodology changes clearly

- Recalibrate auditors after methodology changes

- Track outcome correlations following changes
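To test a weighting change on historical data, re-score past category results under both weightings and list the audits whose pass/fail status would flip. This sketch assumes you have category-level scores for each past audit. The category names, weights, and 82% threshold are placeholders.

```python
def rescore(audits: list[dict[str, float]], weights: dict[str, float]) -> list[float]:
    """Overall score for each historical audit under a given set of category weights."""
    return [sum(weights[cat] * score for cat, score in audit.items()) for audit in audits]


def threshold_crossings(audits, old_weights, new_weights, threshold):
    """(index, old score, new score) for audits whose status against `threshold` would flip."""
    old_scores = rescore(audits, old_weights)
    new_scores = rescore(audits, new_weights)
    return [
        (i, old, new)
        for i, (old, new) in enumerate(zip(old_scores, new_scores))
        if (old >= threshold) != (new >= threshold)
    ]


# Hypothetical history: category scores from two past audits.
history = [{"Rooms": 90, "Safety": 70}, {"Rooms": 80, "Safety": 95}]
current = {"Rooms": 0.75, "Safety": 0.25}
proposed = {"Rooms": 0.50, "Safety": 0.50}
print(rescore(history, current))   # [85.0, 83.75]
print(rescore(history, proposed))  # [80.0, 87.5]
print(threshold_crossings(history, current, proposed, threshold=82))  # [(0, 85.0, 80.0)]
```

A dry run like this shows which properties would change status before anyone is held accountable to the new numbers.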

Educate Stakeholders

- Help department heads understand what drives their scores

- Train GMs on interpretation and communication

- Align improvement incentives with scoring mechanics

- Create transparency about methodology

Technology Requirements

Effective scoring requires capable systems:

Calculation Automation

Manual scoring calculations introduce errors. Automation ensures:

- Consistent weight application

- Accurate N/A handling

- Real-time score calculation

- Automatic aggregation across levels

Reporting Capability

Scores need context through reporting:

- Category and section breakdowns

- Trend visualization

- Property comparisons (where valid)

- Drill-down to item level

Analytics Integration

Advanced analysis capabilities:

- Correlation analysis with satisfaction

- Predictive modeling for at-risk properties

- Pattern detection across portfolio

- Auditor calibration monitoring

Ready to optimize your audit scoring methodology? HAS provides configurable weighted scoring, automated calculation, and detailed breakdown reporting designed for hospitality quality management.

Request a demo to see how leading hotels design scoring systems that drive improvement.


About the Author

Orvia Team

Hotel Audit Experts

The Orvia team brings decades of combined experience in hospitality operations, quality assurance, and technology. We're passionate about helping hotels maintain exceptional standards.